Lasso Research

Explore novel threats, develop layered defenses, and share discoveries that help the AI community build safer systems.

Lasso Research Core Values

AI Pioneering

Building and sharing knowledge that shapes how ML engineers develop and secure AI systems is fundamental to responsible innovation. This principle motivates us to explore novel architectures, contribute methodologies for safe AI deployment, and publish findings that advance the broader conversation.

Intent Alignment

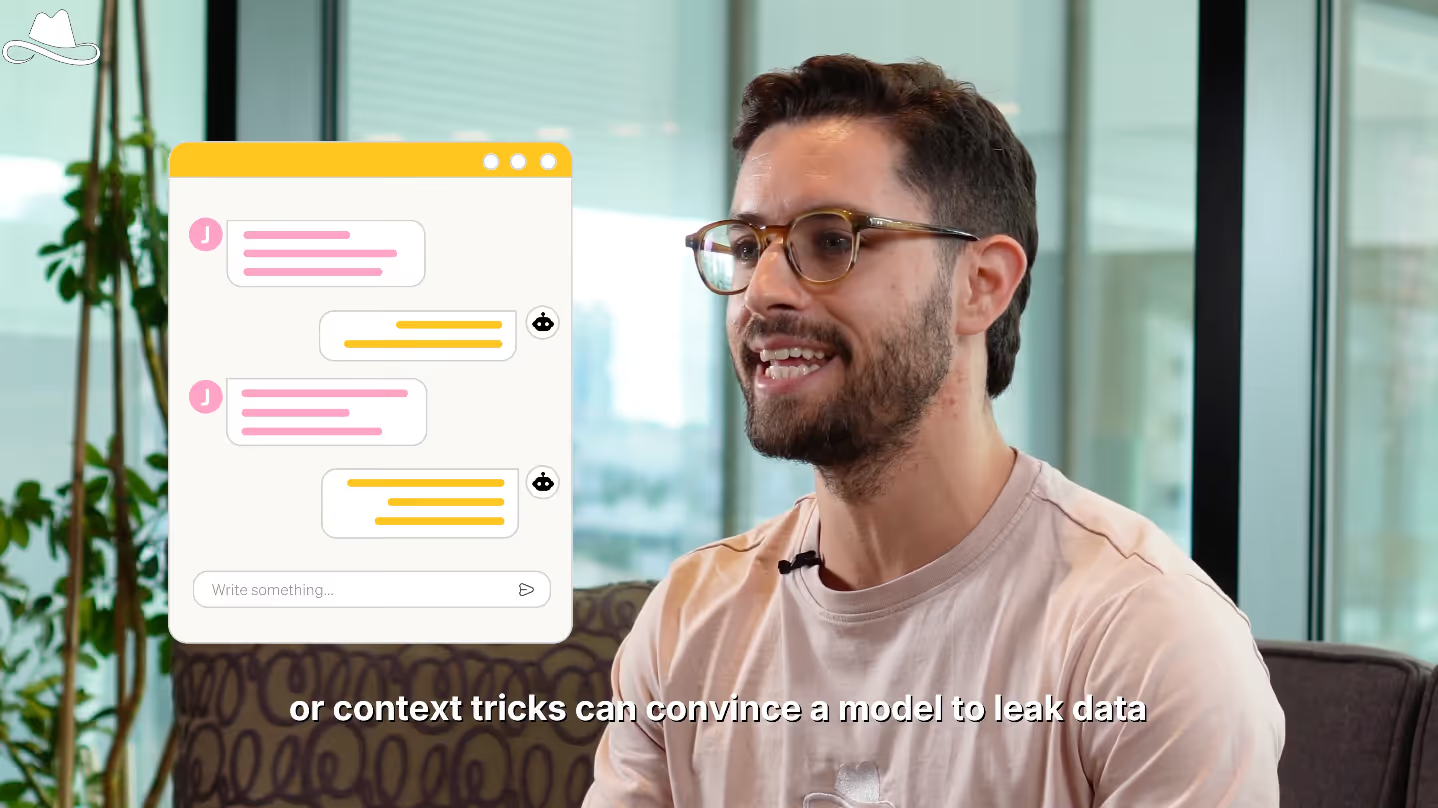

Understanding how to preserve authentic intent in the face of adversarial pressure is core to making AI trustworthy in high-stakes environments. This principle drives our investigation into detecting manipulation, distinguishing legitimate requests from malicious ones, and maintaining the integrity of human-AI communication.

Red Teaming

Validating security claims through systematic, reproducible evidence is essential to building trustworthy defenses. This principle grounds our work in measurable outcomes through comprehensive test suites, clear metrics, and transparent reporting of what works and what doesn't.

Anticipatory Defense

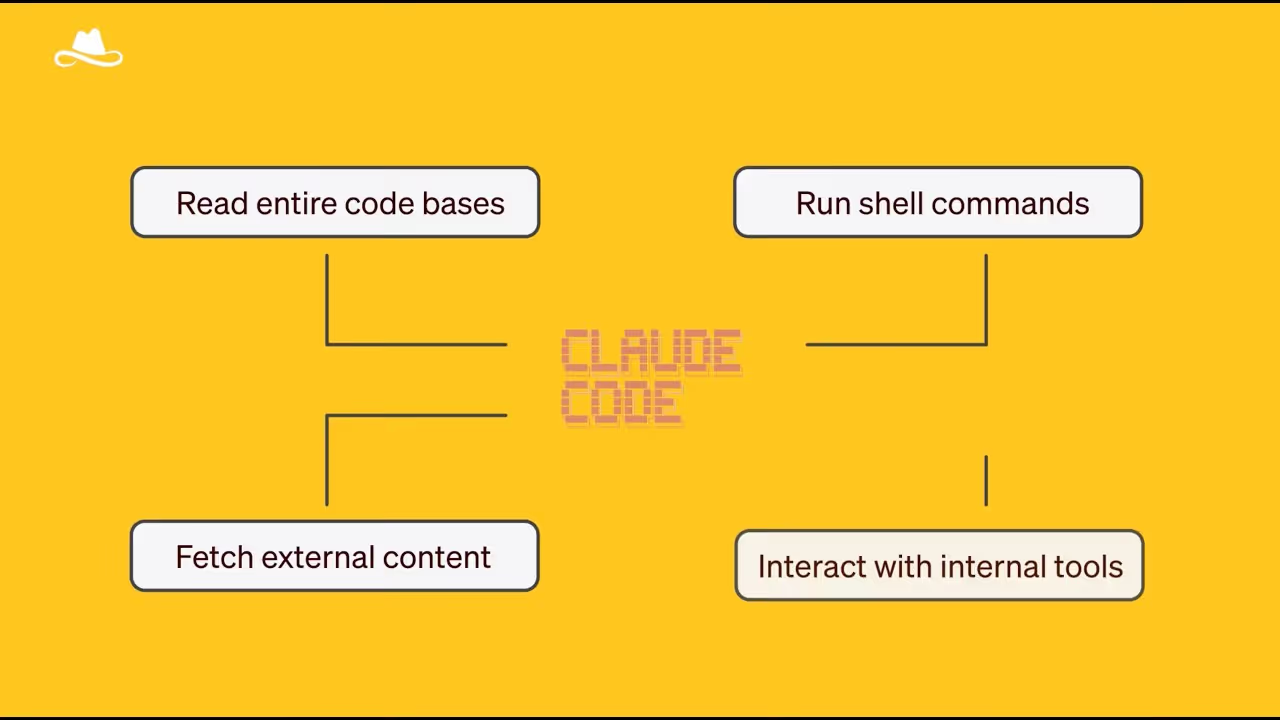

Protecting against both known and unknown AI threats requires systematically exploring emerging attack surfaces, investigating zero-day vulnerabilities before they're exploited, and stress-testing the security assumptions behind novel AI capabilities. When we discover new threats, we share them openly so the community can defend collectively.

Publications

Research

Join the Research team
